This week, I spoke about AI at the APHE (The Association for Photographic Education) annual conference. As you might guess, I am a strong advocate for the adoption of new technologies, and I believe we have a responsibility as educators to prepare our students to adopt and adapt to them. AI is no exception. I recorded a video to this effect while assembling my thoughts for the conference session:

Changing Expectations

From an assessment perspective, my core argument around AI (and therefore my teaching) is that it has raised the bar for what I expect of my students. This is easiest to understand in connection with a written assignment. If, suitably prompted, AI could generate an essay that would meet the existing criteria, then the criteria have to change. This may mean that an assignment previously acceptable at FHEQ Level 4 now needs to meet the standard previously expected at L5. Alternatively or simultaneously, the content of the curriculum may change, with material previously deemed suitable for L6 being introduced sooner or completely new material being added. It could be argued that it is in the FORMATIVE assessments that the biggest impact will be seen, as some may simply not be worth doing and will need to be replaced completely.

The challenge to the student throughout is that they need to add value to their work in ways that AI cannot wholly provide.

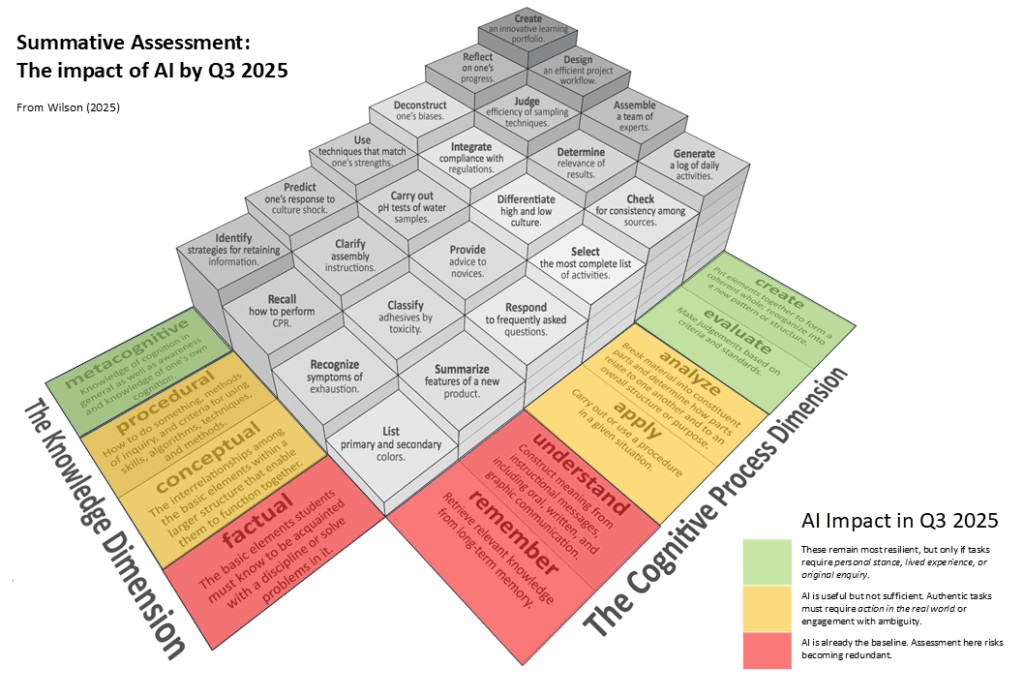

I try to reflect this in terms of Bloom’s Taxonomy (Revised) in the diagram below.

Areas where students have been assessed in the past, such as critical evaluative comparison (‘deconstruct’) and integration across disciplines and contexts (‘integrate’), can no longer be relied upon as evidence of a student's own ability. The two objectives in the taxonomy that are not made largely redundant by AI are ‘reflection’ and ‘creation’.

Original research and data gathering.

Faced with the impact of AI, tutors across a variety of disciplines appear to be looking at creation in terms of original research. Collecting qualitative or quantitative data, conducting interviews, running experiments, or making field observations remain beyond AI’s direct capability. Students who design, carry out, and interpret such work can show added value.

While AI can simulate research reports, it cannot design, conduct, and evidence empirical enquiry in the real world. For students, this is a clear line of demarcation. Photography students have a number of opportunities to achieve this:

Fieldwork-based image-making.

A project that involves photographing a specific community, event, or location, with an account of how access was negotiated, what challenges arose, and how ethical concerns were addressed. The evidence is both the photographs themselves and the narrative of the encounter.

Primary data on audience reception.

Students might mount a small exhibition or share a digital portfolio, then collect and analyse responses (questionnaires, interviews, or focus groups). Assessors will see not just the images but evidence of systematic data collection and thoughtful interpretation.

Experimental design.

Students can deliberately vary a photographic parameter (lighting, framing, printing method) and gather peer responses to test how meaning shifts. This evidences applied research design skills and a critical stance on method.

Personal voice and reflexivity.

A reflective awareness of how their own standpoint, values, or experiences shape interpretation. AI can mimic reflection, but it lacks authentic lived experience. A student writing about how their community engagement shaped their understanding of social theory is demonstrating something non-replicable.

Assessors know what contrived reflection looks like; they tend to reward the “messy” but authentic voice of a student grappling with experience.

Reflective logs.

Keeping a diary of decisions during a project (e.g., why they chose not to photograph someone, how weather shaped the outcome, how they felt when a subject refused). Assessors value the specificity and emotional tone here – it signals lived experience.

Articulation of personal positionality.

A student might discuss how their own identity (gender, class, culture, etc.) shaped their perspective and how that influenced the reading of their images. Assessors will look for reflexive depth, not just description.

Critical self-evaluation, particularly of aesthetics.

A student explaining why an image feels “exploitative,” or why they abandoned a series, demonstrates reflexivity that AI tends to smooth over or rationalise.

Expectations at different levels

This is neither rocket science nor black-and-white; it is largely about subtle shifts in the standard expected of our SUMMATIVE assessments. I include this table here simply to illustrate the kinds of grading criteria that might be expected, for comparison with existing ones. The difference is one of degree. Of course, what is “exceptional metacognitive sophistication” to one assessor may be “a high level” but not “exceptional” to another.